MultiSense

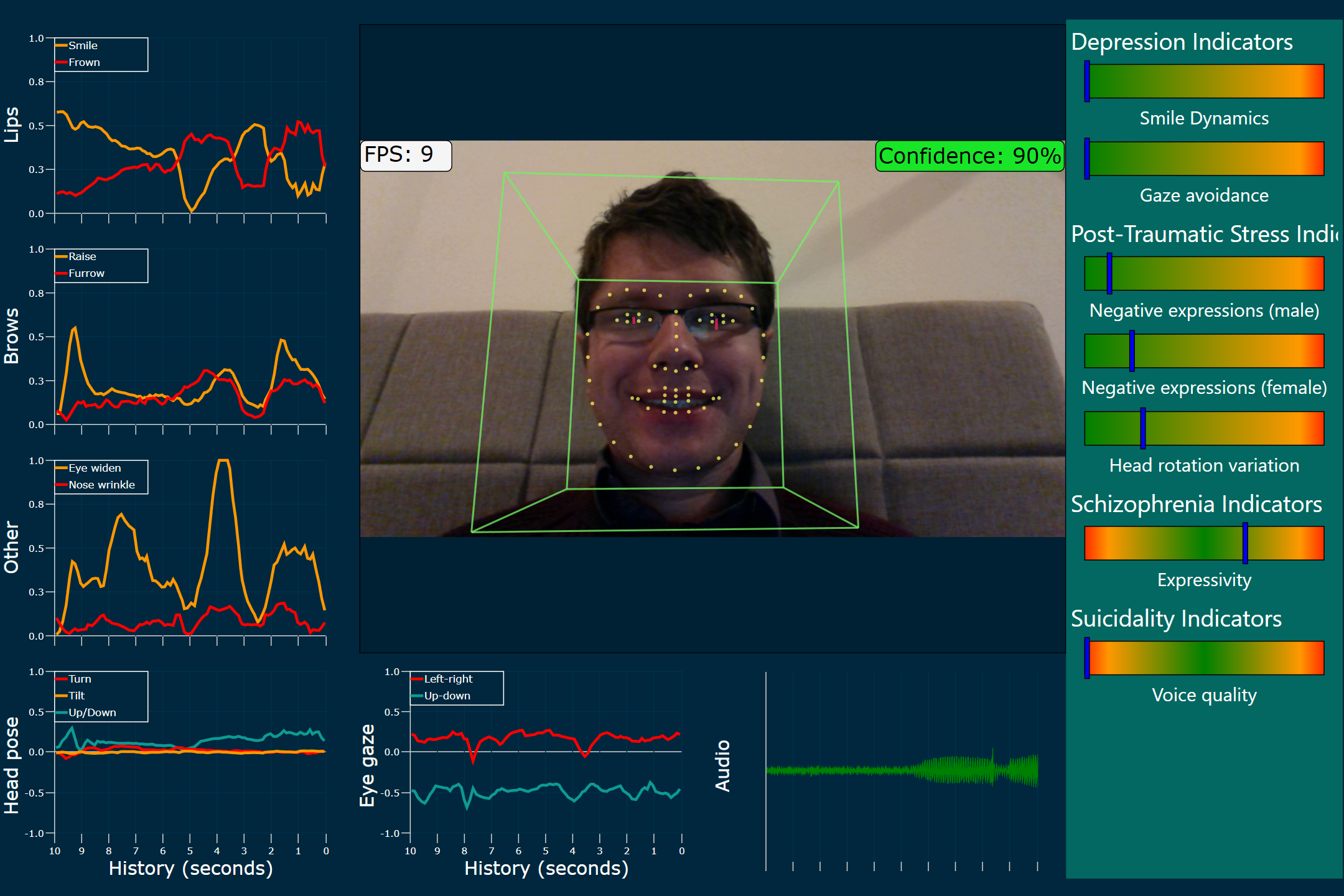

Realtime demo of MultiSense at The White House Frontiers Conference

When President Obama visited Carnegie Mellon University for The White House Frontiers Conference in the fall of 2016, a few select research groups were invited to demonstrate their work to him. The Multicomp Lab, which I was part of, was one of these groups. Our demo featured MultiSense, a platform that senses, perceives, and analyses multimodal social human behaviour in realtime.

My primary responsibility as a Senior Research Engineer had been the design and development of MultiSense. The facial behaviour component of MultiSense was handled by the awesome OpenFace framework, which was also being developed within the group, while we used COVAREP for speech feature extraction in the live demo.

While we already had a functioning build of MultiSense, I redesigned the interface specifically for the demo at the Frontiers Conference. I sketched the wireframe using Paper, and my colleague Tadas incorporated the design into the interface, which was built with Windows Presentation Foundation.